Proof Points

Hey {{first_name}},

I once presented at the American Academy of Neurology annual meeting, with over 10,000 people in attendance, and my abstract was the only one out of more than 3,000 submissions that used the word "Internet."

That was just fifteen years ago.

Last week, a digital mental health company called Limbic AI published a randomised, double-blind trial in Nature Medicine claiming its AI system outperformed licensed therapists on standard CBT outcomes.

Seeing digital health evidence in the world's number 4 medical journal is something I couldn't have imagined even five years ago (and thank you to the Hemingway Report for lively discussions on whether that ranking holds up!)

Something has shifted. This issue covers two things: how digital health has finally broken into mainstream science, and why that's created a new trap worth knowing about before your next publication decision.

– Paul

THE LATEST PODCAST

Ada Health's Bet on Medical Rigour

Ada Health has spent 15 years building real evidence. It has come at significant cost, but the results are hard to argue with.

This week I sat down with Daniel Nathrath, founder and CEO of Ada Health, who has built a genuinely novel approach to eliminating hallucinations in AI-powered symptom assessments.

We also got into rare disease diagnostics, what the Berlin aquarium explosion taught him about resilience, and the most expensive lesson he's learned building in healthcare.

DEEP DIVE

Digital Health Has Its K-Pop Moment (Watch Out)

For most of the last 25 years, “digital health” followed the same path as K-pop (yes, we are using this reference). K-pop (Korean pop music) spent decades being dismissed by the Western music industry… until it built its own stages, dance styles, labels, and obsessively engaged fanbase. Eventually, the industry could no longer afford to ignore it.

Now let's tie this back to digital health.

In the late 1990s, a physician named Gunther Eysenbach had a problem. He kept trying to publish legitimate, rigorous research about the internet in mainstream medical journals.

But… they kept getting rejected. Not because the science was wrong, but because the editors and peer reviewers simply thought the internet was a fad. Locked out of the mainstream, Eysenbach built his own publishing system instead, with rigorous reporting standards, conferences, and even its own peer review ecosystem.

Thus, JMIR (the Journal of Medical Internet Research) was founded. JMIR went on to spawn over 20 specialist journals, developed reporting guidelines specific to digital health studies, and created space for protocols and early-stage findings that traditional journals would have binned. For a long time, if you published in digital health, JMIR and its sister journals were your primary venues.

That has changed.

The prestige publishers have arrived. The Lancet launched The Lancet Digital Health. Nature launched npj Digital Medicine. The BMJ launched BMJ Digital Health and AI. The New England Journal of Medicine launched NEJM AI. These are purpose-built journals from institutions with global reach, staffed by people who actually understand the field.

If you'd told me 15 years ago that a digital mental health company would publish a randomised controlled trial in Nature Medicine, I'd have laughed, because the journal simply wouldn't have entertained it.

Last week, Limbic did exactly that: a randomised, double-blind study claiming its AI clinical reasoning system outperformed licensed therapists on standard CBT outcomes, published in one of the most respected clinical journals on the planet.

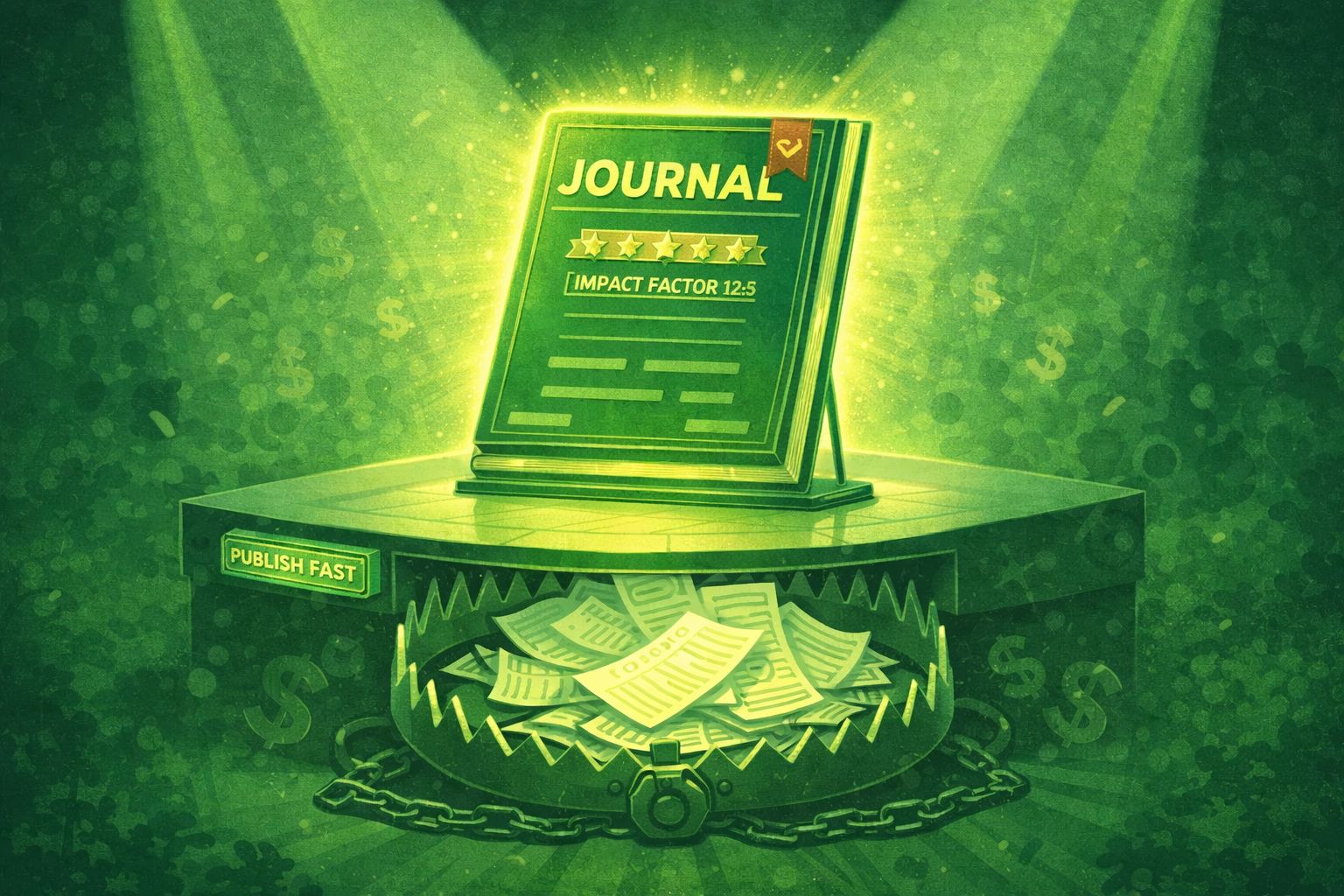

Now for the part nobody puts in their funding deck.

When a field gains prestige, it attracts people trying to exploit that prestige.

Enter “predatory journals”: publications that charge article processing fees and claim to conduct peer review. They are actively targeting digital health companies. These journals accept the vast majority of submissions (often >95%), sometimes list fake or unconsenting researchers on their editorial boards, and publish articles regardless of reviewer feedback. For them, every accepted article generates revenue.

📝 TIP

How to Recognize Predatory Journals

The email flatters you, promises fast publication, and the fees are buried or absent

The impact factor can't be verified, or looks like someone made it up

The editorial board lists people you can't find, or who have no relevant credentials

Recent papers read like they were written by AI and accepted regardless

They want your copyright before you've signed anything

NOTE: Publishing in these journals can hurt your company's reputation, no matter how much effort went into writing the paper.

In our own evidence audit survey, one in three respondents couldn't reliably identify a predatory journal. I've personally met experienced researchers who have accidentally published in one, because a friend was a special issue guest editor, the process felt fast, and the timeline pressure was real.

Publishing in one doesn't just fail to help you. It actively damages your credibility with clinicians and researchers who know the field. It's the worst possible return on what was probably a lot of work for your team.

Here are three ways to check before you submit. First, look at the editorial board: do you recognise anyone, and are these the kinds of people whose company you'd want professionally? Second, skim a few recent papers from the journal and watch for AI-looking writing, AI-generated graphics, and conclusions that dramatically outrun the data. Third, ask around: a trusted colleague, or your network on LinkedIn or WhatsApp.

If you want to go deeper, this short video explainer is a good practical primer on spotting the warning signs.

Eminently quotable billionaire Warren Buffett puts it best: “It takes 20 years to build a reputation and five minutes to ruin it. If you think about that, you'll do things differently.”

— Paul

One Tool Worth Bookmarking:

Directory of Open Access Journals (DOAJ) — a vetted, searchable list of legitimate peer-reviewed open access journals

FROM OUR DESK

This Month at ProofStack

I posted a teaser last week linking to the ChatGPT paper in Nature Medicine and tagged the researchers from Mount Sinai who wrote it.

One of them reposted it within the hour with "prove things" and a little rocket ship. Apparently we've made a catchphrase!

It's a small thing, but it stuck with me: the researchers publishing in these journals are watching this conversation in real time, and they want to be part of it. That felt different from anything I experienced fifteen years ago, when I was the only person who'd put the word "Internet" in a conference abstract. The field has genuinely changed.

It's a good time to be building in it properly!

ONE QUESTION

Would you trust an AI tool to triage patient symptoms if it hadn't been independently validated?

P.S. Do you know your evidence score? We created this quick quiz to help you understand your regulatory compliance level.