→ Proof Points

Welcome to Proof Points, a biweekly newsletter by ProofStack Health. This is Issue 1, and we're so glad you're here.

Every two weeks, you'll get frameworks, cautionary tales, and the kind of insider thinking that helps you prove it before you claim it. No waffle. Just the stuff that actually matters when your evidence strategy is on the line.

I've been thinking a lot about Las Vegas lately, and not just because I'm heading there for HIMSS26 in a few weeks (more on that in our Conferences section below). Las Vegas feels oddly appropriate for digital health right now. Because digital health is, in many ways, a gamble. You're betting that your product works, that your evidence holds up under scrutiny, that the FDA won't send you a letter, and that the market actually wants what you've built. Some companies play it smart. Others are pulling levers on a slot machine and hoping for the best! 🎰

This issue's deep dive is a case study in exactly that tension. I stumbled across a targeted ad for an app called Jenni this week — marketed as an AI writing tool that checks citations, but in practice it looks rather more like a tool designed to help students cheat on term papers. Which brought me neatly to a bigger question: AI, mental health, and the line between what you say you do and what you actually do.

Let's jump in!

— Paul

OUR PODCAST

Why FDA-Grade Evidence (Still) Matters

In case you missed it, last week we launched our podcast with the honorable Dr. Acacia Parks. Dr. Parks is currently the VP of Regulatory Compliance and Engineering at Avania and has a deep history in matching evidence to outcomes.

The question we're asking:

🤔 What actually counts as evidence in the Digital Health space?

DEEP DIVE

AI Is Now the Front Door to Mental Health. Does Anyone Actually Have the Evidence to Back That Up?

A few weeks ago, I was sitting in a room at the Wellcome Trust in London, facilitating a conversation with about 25 founders and chief medical officers from digital mental health companies. People who have spent years, some of them decades, building apps, platforms, and therapeutic tools.

I asked them a simple question: did they think that the number of people using AI for mental health conversations (e.g. ChatGPT, Claude, Gemini or third-party providers) is larger than the combined user base of every dedicated digital mental health app that has ever existed?

Every single person in the room put their hand up.

The latest numbers out of OpenAI suggest 40 million people per day are using ChatGPT for health conversations. Forty million. As one of the founders in that room put it: that's more than all the other digital mental health apps have ever had. By an order of magnitude.

So here we are.

The scale is unprecedented. The evidence infrastructure is not.

I come to this with a particular lens. By training, I'm a neuropsychologist. Early in my career I was at one of the largest clinical psychology training programmes in London — we were training maybe 26, 28 people a year to become clinical psychologists. It was an extraordinarily competitive course. And even then, at one of the best institutions in the country, the gap between demand and supply was stark. We simply could not produce enough trained clinicians to meet the need.

Then I spent over a decade at PatientsLikeMe, where I watched 100,000+ people with mental health conditions support each other, track their outcomes, and share their experiences with medication. The internet was already reshaping how people accessed health information and community.

But what's happened with AI in the last two years makes that look like a dry run.

AI is now the front door to mental health. Not so long ago, the front door was Google, and before that, it was a real front door to the emergency room.

Which brings me to the "Betrayal" Anthropic Super Bowl ad.

If you haven't seen it, the premise is darkly simple. Someone asks an AI chatbot for help communicating better with their mum. The bot offers thoughtful advice: start by listening, build conversation from points of agreement, perhaps a nature walk… but then the creepily unblinking "woman" pivots to an ad for a cougar dating site: "if the relationship can't be fixed, find emotional connection with other older women on Golden Encounters." The confused customer sits there, bemused ("What?"), and throws his phone away.

Scott Galloway's take on these shots fired against OpenAI was characteristically sharp. His argument was that what Anthropic understood is that the dominant, unstated use case for AI is not productivity. It's therapy. And inserting an ad into a therapy session isn't just tone-deaf, it's potentially dangerous. If someone is confessing to an AI that they're struggling, and the platform pivots to monetisation, the trust is shattered.

Funny as it is, forget the daft take on ads for a second. Here's the thing that none of those ads actually addressed, and which is the question that genuinely keeps me up at night:

Where does an LLM draw the line between supportive conversation and clinical intervention?

OpenAI has actually built out what they're calling a health-specific mode. If you share your lab results with ChatGPT, it's now meant to pull you into a separate, protected conversation thread, one that doesn't feed your data back into model training, and can, in theory, access your electronic health records with your authorisation (reports on LinkedIn suggest mixed success).

That's interesting. But the moment I start thinking about this in a mental health context, I get nervous.

There's a spectrum here. If someone tells an AI they're feeling a bit low, that's clearly different from someone disclosing active suicidal ideation. Where does the system intervene? Where does it recommend a clinician? And if it does recommend professional help... is that a genuine clinical response, or is it a liability shield? I'm sitting this particular OpenAI release out.

The people I've worked with who have built validated therapeutic interventions are now staring down the barrel of something that is essentially free, integrated into the devices people already use, and already being accessed at a scale they cannot compete with. They will not win a user acquisition battle against OpenAI. So the question becomes:

What is the actual role of the evidence-based digital mental health ecosystem in a world where AI has become the default first point of contact?

I don't have a clean answer. But I have a strong suspicion that the companies who will survive—and thrive—are the ones who lean into evidence rather than away from it. Not because the regulators will force them to (though they might), but because trust, once broken at scale, is extraordinarily hard to rebuild. Especially when the thing people trusted was supposed to be listening.

The proof point here is simple:

If your product sits at the intersection of AI and mental health, the question isn't whether AI will eat your lunch. It probably will, at the user acquisition level. The question is whether you have the evidence to differentiate, to partner with AI platforms as a validated modality, to say to a health system or a payer: "When the AI correctly identifies someone who needs structured intervention, here's the best next step — and here's how we prove this is the safest path."

That's not a crisis for evidence-based digital health. That's actually an opportunity. But only if you've done the work.

— Paul

UPCOMING CONFERENCES

What We're Attending

I'm heading to Basel for health.tech March 2nd - 5th, and then it's straight off to HIMSS26 in Las Vegas March 9th - 12th. This will be my first time at HIMSS, and I am definitely bringing my partner with me and taking a selfie at Cirque.

I did ask around before committing to HIMSS. One person I deeply respect in this space told me: "It's for the health IT old guard. Not sure startups feel that welcome there." That's exactly the kind of comment that makes me want to go and see for myself.

So I'll be on the expo floor with live video updates on LinkedIn, followed by a full writeup here.

Will I see you there? Reply to me here! There might even be a secret party on the Tuesday…

FROM OUR DESK

This Month at ProofStack

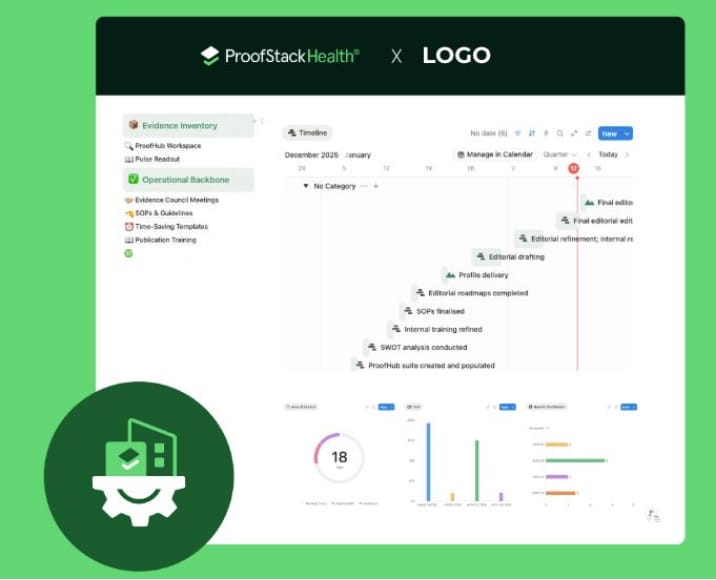

We've been automating major parts of our business: specifically the scientific benchmarking process we call Pulse.

The goal is to make our evidence audits more rigorous and repeatable.

We're automating SOP templates, SWOT analyses and editorial drafting along with various baseline timelines throughout the year.

Last week I was lucky enough to attend the inaugural meetup of the London group of The Hemingway Report, the leading online community for digital mental health leaders. Helmed by super-maven expat and all-round legend Steve Duke, this newsletter and Slack group made the tricky conversion to IRL at Caravan in King's Cross.

Before the starters had landed, the hot takes were flying fast: "the research team is really just an extension of the marketing function", "Europe is the only bloc that can block ChatGPT's dominance of unregulated mental health slop masquerading as care", and "tools co-created with patients are the only interventions that have the moral authority to be taken seriously." Great fun!

Congrats to Steve for creating such a high-trust, high-agency group. It was thrilling to be at the birth of what I'm sure will be a bold force in digital mental health!

ONE QUESTION

What would you most like to see in Proof Points?

P.S. Do you know your evidence score? We created this quick quiz to help you understand your regulatory compliance level.